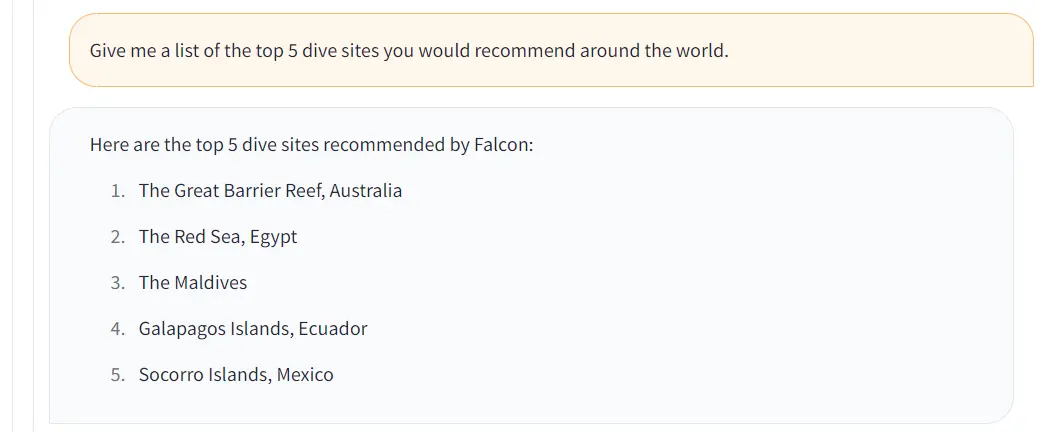

Fine-Tuning Tutorial: Falcon-7b LLM To A General Purpose Chatbot

A step-by-step, hands-on tutorial on fine-tuning a Falcon-7B model with an Open Assistant dataset to build a general-purpose chatbot. A complete guide to fine-tuning LLMs.

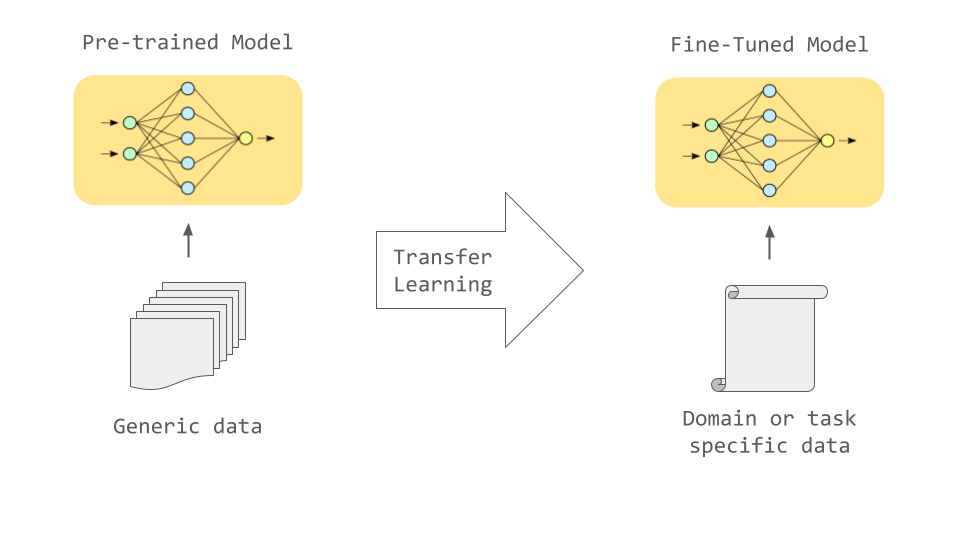

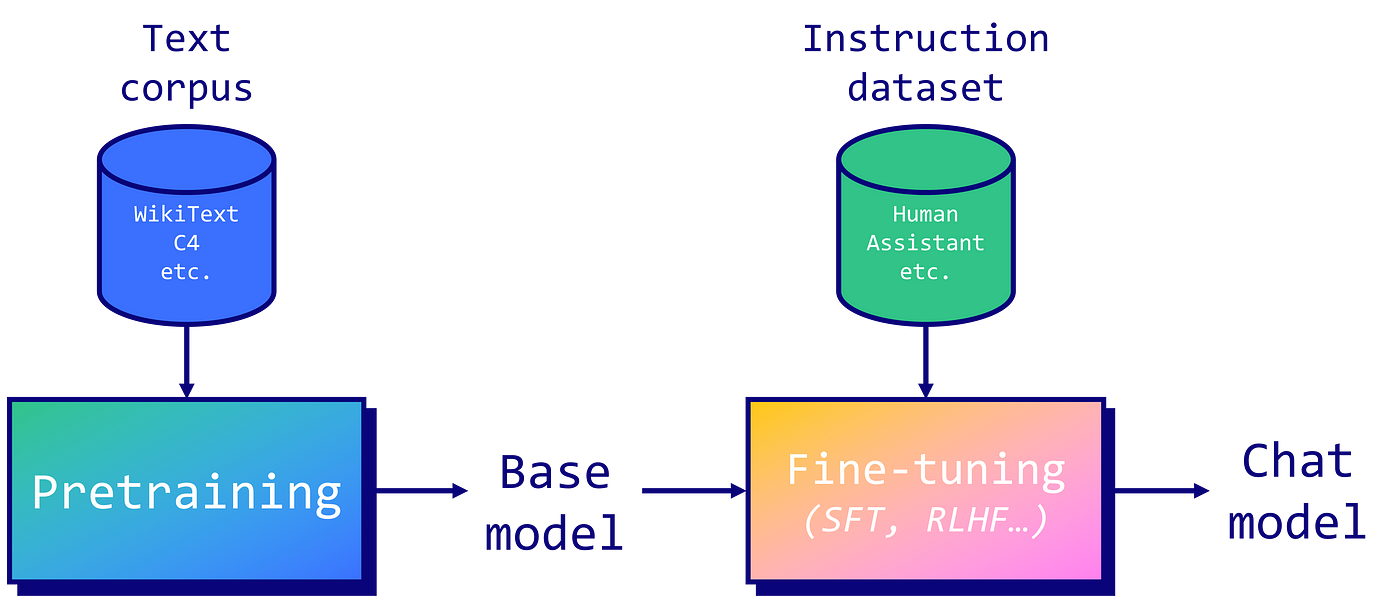

LLMs are trained on extensive text datasets, which equips them to model human language in depth and in context.

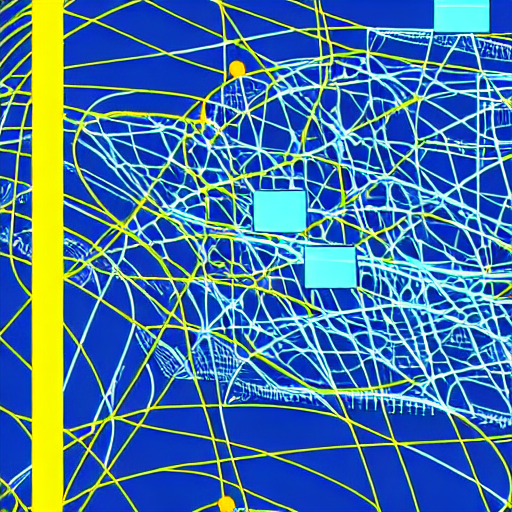

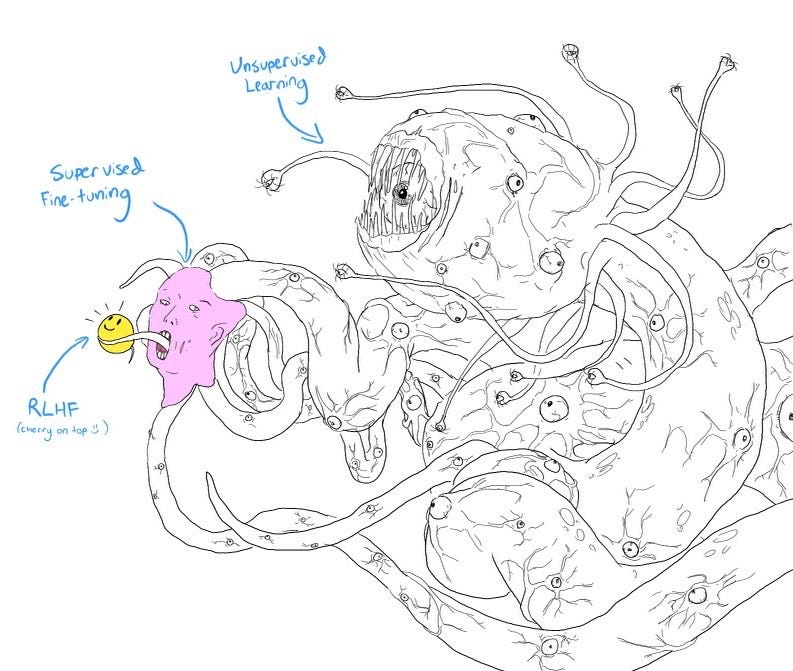

In the past, most models were trained with supervised learning, in which input features are paired with corresponding labels. LLMs take a different route: they are pretrained with unsupervised (more precisely, self-supervised) learning.

In this process, they consume vast volumes of text data devoid of any labels or explicit instructions. Consequently, LLMs efficiently learn the significance and interconnect
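To make the idea of learning from unlabeled text concrete, here is a minimal sketch of how raw text can supply its own training targets through next-token prediction. The function name `next_token_pairs`, the whitespace tokenization, and the `context_size` parameter are illustrative assumptions, not part of the tutorial; real LLMs use subword tokenizers and far longer contexts.

```python
def next_token_pairs(text, context_size=3):
    """Turn raw, unlabeled text into (context, target) training pairs.

    Hypothetical helper for illustration: each token becomes the
    prediction target for the tokens that precede it, so no human
    labeling is required.
    """
    tokens = text.split()  # assumption: naive whitespace tokenization
    pairs = []
    for i in range(1, len(tokens)):
        # Keep at most `context_size` preceding tokens as the input.
        context = tokens[max(0, i - context_size):i]
        pairs.append((context, tokens[i]))
    return pairs


pairs = next_token_pairs("the falcon flies over the desert")
for context, target in pairs:
    print(context, "->", target)
```

Each pair uses the preceding words as input and the next word as the target, which is why this style of training needs no manually written labels.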

Fine-Tuning the Falcon LLM 7-Billion Parameter Model on Intel

Comparing the Best Open-Source Large Language Models

Large Language Models: Fine-tuning MPT-7B on Paperspace

Models We Love: June 2023

Finetuning an LLM: RLHF and alternatives (Part I), by Jose J. Martinez, MantisNLP

Explore informative blogs about large language model

Fine-Tune Your Own Llama 2 Model in a Colab Notebook

Falcon LLMs: In-depth Tutorial. tutorial