DistributedDataParallel non-floating point dtype parameter with requires_grad=False · Issue #32018 · pytorch/pytorch · GitHub


🐛 Bug: Using DistributedDataParallel on a model that has at least one non-floating point dtype parameter with requires_grad=False, with WORLD_SIZE <= nGPUs/2 on the machine, results in the error "Only Tensors of floating point dtype can re
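The kind of model the report describes can be sketched without any distributed setup. The snippet below is a minimal illustration, not the reporter's code: `ModelWithIntParam` and `non_float_params` are hypothetical names introduced here. It builds a module that holds a frozen integer parameter, lists the parameters whose dtype is not floating point, and shows that asking an integer tensor to require gradients raises a `RuntimeError`. It does not wrap the model in DistributedDataParallel, so it does not reproduce the multi-GPU failure itself.

```python
import torch
import torch.nn as nn


class ModelWithIntParam(nn.Module):
    """Hypothetical model: one float layer plus a frozen integer parameter."""

    def __init__(self):
        super().__init__()
        self.linear = nn.Linear(4, 2)
        # An integer parameter can only be created with requires_grad=False.
        self.index = nn.Parameter(torch.tensor([0, 1, 2]), requires_grad=False)

    def forward(self, x):
        return self.linear(x)


def non_float_params(module):
    """Return the names of parameters whose dtype is not floating point."""
    return [
        name
        for name, param in module.named_parameters()
        if not param.is_floating_point()
    ]


model = ModelWithIntParam()
offenders = non_float_params(model)
print("non-floating point parameters:", offenders)

# Requesting gradients on an integer tensor is rejected by autograd.
raised = False
message = ""
try:
    torch.tensor([0, 1, 2]).requires_grad_(True)
except RuntimeError as exc:
    raised = True
    message = str(exc)
print("RuntimeError raised:", raised)
```

Running a check like `non_float_params` before wrapping a model may help locate which parameters are involved when this error appears. The exact wording of the error message can differ between PyTorch versions.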

Issue for DataParallel · Issue #8637 · pytorch/pytorch · GitHub

[Distributed] `Invalid scalar type` when `dist.scatter()` boolean

Error using DDP for parameters that do not need to update

If a module passed to DistributedDataParallel has no parameter

RuntimeError: Only Tensors of floating point dtype can require

Wrong gradients when using DistributedDataParallel and autograd

Torch 2.1 compile + FSDP (mixed precision) + LlamaForCausalLM

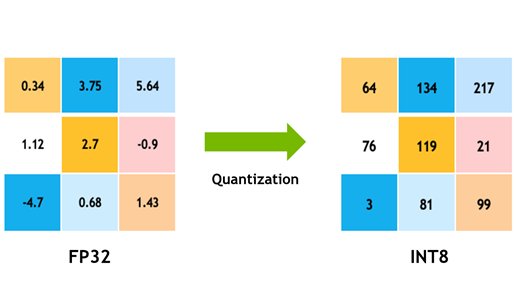

Achieving FP32 Accuracy for INT8 Inference Using Quantization


torch.distributed.barrier Bug with pytorch 2.0 and Backend=NCCL

